Technology trends have changed significantly in the last decade. Digital transformation has accelerated the migration of enterprise applications and workloads from traditional data centers to the public cloud.

Applications are now available everywhere and can be accessed from anywhere. 4G/5G technology and cost-effective Internet circuits have enabled users to work from anywhere. The rigid networks of the past do not work in the new digital economy: traditional WAN architectures were not built to support cloud applications.

These changes have also brought new challenges. Internet connections are not inherently secure, and there is an increase in the use of BYOD (Bring Your Own Device), which has increased the attack surface of corporate networks.

The standard hardware-based product life cycle is being replaced by usage-based subscriptions. Businesses are moving from permanently fixed infrastructure to on-demand cloud services.

Enterprises are looking to extend their security perimeter all the way to the user, while providing a better user experience and visibility into application performance and usage.

This is where the SASE architecture comes into play. It contains the five major components listed below.

1) Software-Defined Wide Area Network (SDWAN) : Simplifies IT infrastructure control and management by building a virtual WAN over public and private networks that securely connects users to their applications.

2) Secure Web Gateway (SWG) : Provides granular control of and visibility into web traffic, and enforces the appropriate corporate security policies.

3) Cloud Access Security Broker (CASB) : Helps to manage and protect corporate data that is stored in the cloud.

4) Zero Trust Network Access (ZTNA) : Connects distributed users with distributed applications without compromising on security or user experience.

5) Firewall-as-a-Service (FWaaS) : Cloud service that provides advanced security to the infrastructure, applications, and platforms managed and hosted by an organization in its cloud infrastructure.

We will now look into each of these components in detail.

SDWAN:

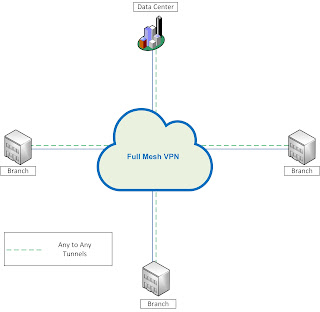

In traditional networks, service providers would give the customer a link at each of their locations, which would enable any-to-any full mesh connectivity. The service providers would use VPN technology to create tunnels between the various end points, so that each end point appears to be directly connected to the remote customer devices.

The internet breakout in such a network would be from a central location or from a specific branch office.

The key challenges with this architecture are:

- Expensive Bandwidth : MPLS circuits normally cost more than Internet access. If a branch office has two circuits implemented as active/passive, the backup circuit sits idle until the primary link fails. It is difficult to achieve load sharing between redundant links.

- Failover : In an active/passive setup, failover is completely dependent upon the state of the link (up/down).

- Control : Configuration is done locally on each individual router. Any policy change requires a manual change on each device.

- Visibility : There is very little application-level visibility with such an architecture. One has to rely on external tools to get the necessary application data.
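The failover limitation above can be illustrated with a minimal sketch. The `Link` class, the `loss_pct` field, and the link names are hypothetical and used only for illustration. The sketch assumes a classic active/passive pair where only the up/down state is consulted, so a degraded primary that is still "up" keeps carrying traffic.

```python
from dataclasses import dataclass


@dataclass
class Link:
    name: str
    up: bool          # link state as seen by the router
    loss_pct: float   # hypothetical measured packet loss, ignored below


def select_active_passive(primary: Link, backup: Link) -> Link:
    """Classic active/passive selection: only the up/down state matters."""
    return primary if primary.up else backup


# The primary is up but badly degraded; the backup is healthy and idle.
mpls = Link("mpls-primary", up=True, loss_pct=12.0)
inet = Link("internet-backup", up=True, loss_pct=0.1)

chosen = select_active_passive(mpls, inet)
print(chosen.name)  # the degraded primary is still selected
```

Because the selection never looks at `loss_pct`, traffic only moves to the backup once the primary goes fully down.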

The SDWAN solution has the following key components:

Management : Centralized management, configuration and monitoring

- Single pane of glass

- Configuration creation and management

- Centralized deployment system

- Simplified on-boarding process

Control Plane: Distribution of reachability information

- Tunnel creation

- Route advertisement

- Auto-discovery

- Topology management

Data Plane: Data Transport

- Tunneling and encapsulation

- Encryption

- Data forwarding and path selection

- Enforcement of security policies

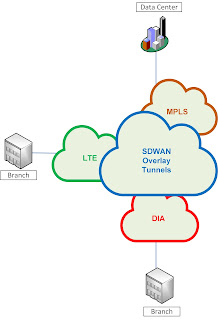

SDWAN can use any underlay transport, such as MPLS, DIA or LTE, to build overlay tunnels for enterprise traffic. Customers can choose which physical path to use based on path properties, security policies, application type, user groups, path stability, and so on.
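As a rough illustration of policy-based path selection, the sketch below picks an underlay path per application. It is not any vendor's implementation: the `Path` and `AppPolicy` classes, the thresholds, and the measured values are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Path:
    name: str           # underlay transport, e.g. "mpls", "dia", "lte"
    latency_ms: float   # hypothetical measured latency
    loss_pct: float     # hypothetical measured packet loss
    up: bool = True


@dataclass(frozen=True)
class AppPolicy:
    max_latency_ms: float
    max_loss_pct: float
    preferred: Tuple[str, ...]  # transports in order of preference


def select_path(paths: Sequence[Path], policy: AppPolicy) -> Optional[Path]:
    """Return the first preferred path that is up and within thresholds.

    If no preferred path qualifies, fall back to the up path with the
    lowest loss (ties broken by latency). Returns None if all paths are down.
    """
    by_name = {p.name: p for p in paths}
    for name in policy.preferred:
        p = by_name.get(name)
        if (p is not None and p.up
                and p.latency_ms <= policy.max_latency_ms
                and p.loss_pct <= policy.max_loss_pct):
            return p
    candidates = [p for p in paths if p.up]
    if not candidates:
        return None
    return min(candidates, key=lambda p: (p.loss_pct, p.latency_ms))


paths = [Path("mpls", 30.0, 0.1), Path("dia", 45.0, 0.5), Path("lte", 80.0, 2.0)]
voice = AppPolicy(max_latency_ms=50.0, max_loss_pct=1.0, preferred=("mpls", "dia"))
bulk = AppPolicy(max_latency_ms=200.0, max_loss_pct=3.0, preferred=("dia", "lte", "mpls"))

voice_path = select_path(paths, voice)
bulk_path = select_path(paths, bulk)
print(voice_path.name, bulk_path.name)
```

With these made-up measurements, voice traffic stays on MPLS while bulk traffic is steered to DIA. If the MPLS path's loss rose above the voice threshold, the same policy would move voice to DIA without the link having to go down.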

We will look at the remaining components of the SASE architecture in the second part of this blog.